Scope/Description

This article explains how to begin configuring the network for a new Ceph cluster, including how to cable each storage node and gateway system according to best practices.

Assumptions:

- A 5 node cluster with 2 filesystem gateways will be used as an example

- 2 networks will be used: a cluster network and a public network

- Built on 10 Gigabit architecture

Prerequisites

- Read this article on Ceph Recommended Network Configuration

- Access to the physical hardware you will be configuring

- Access to IPMI remote management

Steps

Physical Configuration

- Create a network wiring diagram or schematic illustrating the end goal of the network configuration. This can be a simple sketch.

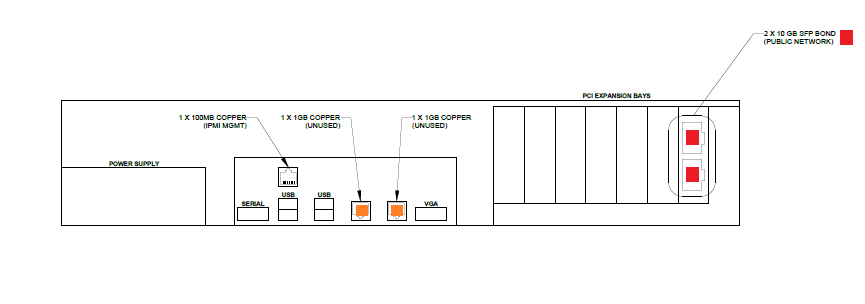

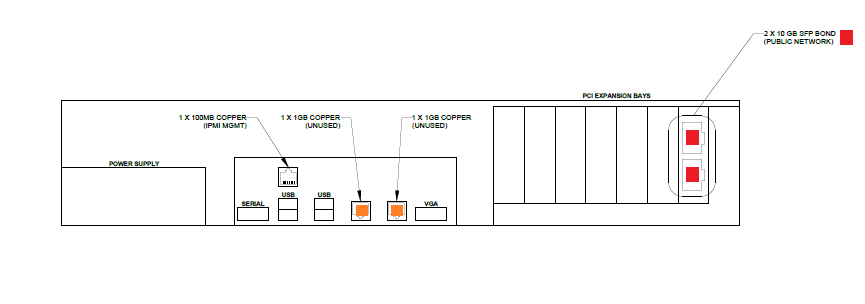

- Cable the systems according to the wiring diagram. The 1G management network is NOT a requirement.

- Storinator:

- Ensure proper cabling to the switch or switches. The configuration can use a single switch for both the public and cluster networks (switch VLAN support required) or a separate switch for each network. High Availability network switches can also be configured.

- Once cabling is complete, ensure all cables are firmly connected and the link lights are on.

Software Configuration

- Log in to the shell via IPMI to begin configuring the network

- Ensure all cabled interfaces are connected and report "UP"

- It is important to determine the correct interface for each physical port. This can be done by MAC address; however, typically the top interface name ends in a "0" and the bottom interface name ends in a "1". This determines which interfaces belong in which bond.

- Configure Network Bonds: KB Article: http://knowledgebase.45drives.com/kb/kb450130-single-server-linux-network-bonding-setup/

- Recommended bonding protocols:

- LACP (bonding mode 802.3ad) - switch support required

- Active-Backup (bonding mode active-backup) - switch support not required

- Adaptive Load Balancing (bonding mode balance-alb) - switch support not required
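
Matching interface names to physical ports is easier with the link state and MAC address of every interface side by side. The following is a minimal sketch, assuming a Linux system that exposes interfaces under `/sys/class/net`; the function name `list_interfaces` is hypothetical and not part of any 45Drives tooling.

```shell
#!/usr/bin/env bash
# Print "name<TAB>state<TAB>MAC" for every network interface except loopback.
# The optional first argument overrides the sysfs directory (assumed default:
# /sys/class/net), which also makes the function easy to try on sample data.
list_interfaces() {
    local sysfs_net="${1:-/sys/class/net}"
    local dev name
    for dev in "$sysfs_net"/*; do
        # Skip entries that are not interfaces (for example bonding_masters).
        [ -e "$dev/address" ] || continue
        name="$(basename "$dev")"
        [ "$name" = "lo" ] && continue
        printf '%s\t%s\t%s\n' "$name" "$(cat "$dev/operstate")" "$(cat "$dev/address")"
    done
}
```

Running `list_interfaces` with no arguments on a storage node prints one line per interface. Unplugging a single cable and running it again shows which name changes state, which is one way to confirm the top/bottom port mapping before building the bonds.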

Verification

- Verify that every system can ping every other system on both the public AND private (cluster) networks WITHOUT any packet loss.
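
One way to run this check is a small ping loop executed on each node. This is a sketch under stated assumptions: it relies on the `-c` (count) and `-q` (quiet summary) options of the common Linux `ping`, and the function names `loss_percent` and `check_hosts` are hypothetical.

```shell
#!/usr/bin/env bash
# Extract the packet-loss percentage from ping's summary line (read on stdin).
loss_percent() {
    grep -oE '[0-9.]+% packet loss' | cut -d'%' -f1
}

# Ping every address given as an argument and report the result per host.
# Returns non-zero if any host is unreachable or drops packets.
check_hosts() {
    local host loss failed=0
    for host in "$@"; do
        loss="$(ping -c 5 -q "$host" 2>/dev/null | loss_percent)"
        if [ "$loss" = "0" ]; then
            echo "OK   $host"
        else
            echo "FAIL $host (packet loss %: ${loss:-unknown})"
            failed=1
        fi
    done
    return "$failed"
}
```

For example, `check_hosts 10.0.0.11 10.0.0.12 10.0.1.11 10.0.1.12` would test two peers on each network; these addresses are placeholders and must be replaced with the public and cluster addresses of the other nodes and gateways.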

Troubleshooting

- Check that all interfaces in the bond are UP with `ip a`

- Verify that all link lights are on

- Verify interfaces are at the correct speed

- Check physical connections

- Check bond configuration for errors

- Verify that the default gateway is set on the public network
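
Several of these checks can be read from the status file the Linux bonding driver keeps for each bond. The sketch below assumes the usual location `/proc/net/bonding/<bond>` and the usual `Slave Interface:`, `MII Status:` and `Speed:` lines; the function name `bond_members` and the bond name `bond0` are hypothetical.

```shell
#!/usr/bin/env bash
# Print "interface status speed" for every member of a bond, read from the
# bonding driver status file passed as the first argument.
bond_members() {
    local status_file="$1"
    awk -F': ' '
        /^Slave Interface:/   { name = $2 }            # start of a member section
        name && /^MII Status:/ { status = $2 }         # link state of that member
        name && /^Speed:/      { print name, status, $2; name = "" }
    ' "$status_file"
}
```

Calling `bond_members /proc/net/bonding/bond0` lists each member with its link state and negotiated speed, so a member that is down or running below 10 Gigabit stands out. The default route can be inspected separately with `ip route show default`.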